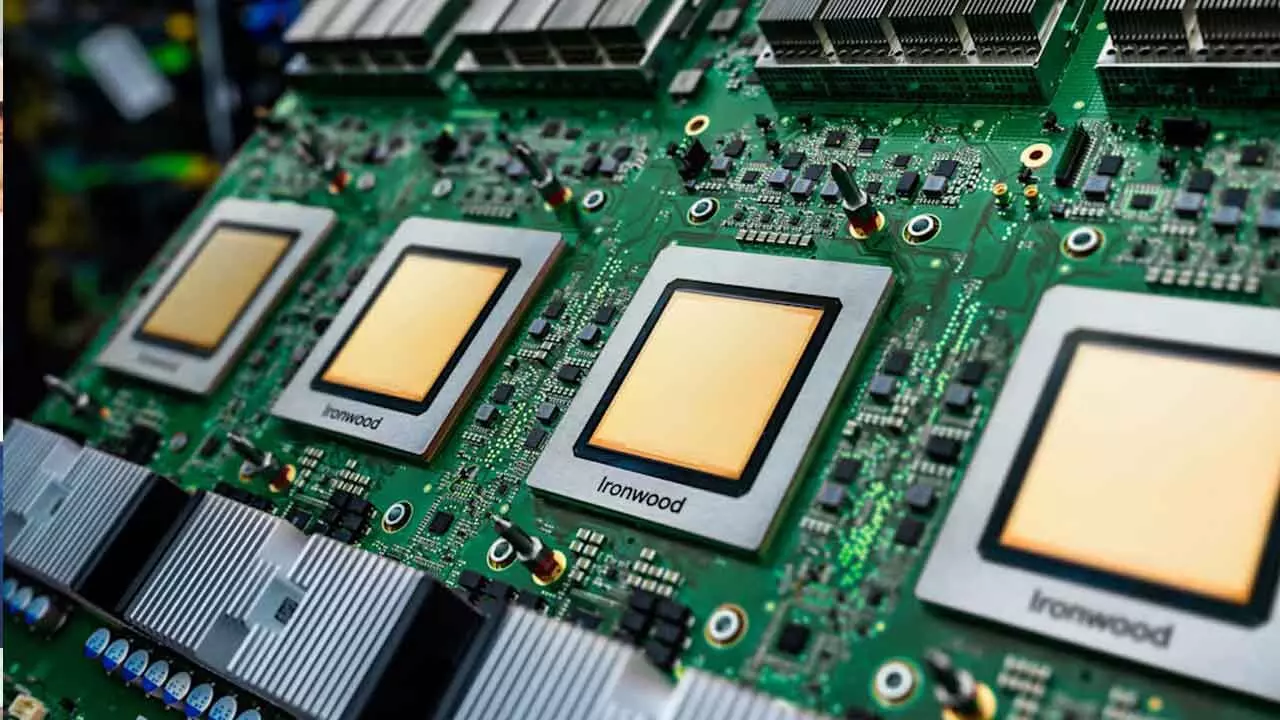

Google Cloud revealed on Wednesday that the eighth generation of its custom-designed AI chips, tensor processing units (TPUs), will be split into two. The TPU 8t chip will be used for model training, while the TPU 8i chip will be used for inference.

Inference is the ongoing use of a trained model, meaning what happens after users enter their prompts.

As one might expect, the company touts some impressive performance specifications for the new TPUs compared with earlier generations: up to three times faster AI model training, 80% better performance per dollar, and the ability to link more than a million TPUs into a single cluster. For customers, that should mean far more compute for far less energy and cost than previous versions offered. Google calls these chips TPUs rather than GPUs because its original custom low-power chips were named Tensor.

Google’s chips are not a direct challenge to Nvidia, however, at least not yet. Like the other big cloud providers, Microsoft and Amazon, Google is using these chips to supplement the Nvidia-based systems it offers in its infrastructure, not to replace them outright. In fact, Google says Nvidia’s latest chip, Vera Rubin, will be available in its cloud later this year.

As businesses move their AI workloads to the cloud and port their applications to these chips, hyperscalers such as Amazon, Microsoft, and Google that are building their own AI chips may eventually become less dependent on Nvidia.

Even so, betting against Nvidia is not a winning move right now. When Google introduced its first TPU in 2016, renowned chip market analyst Patrick Moorhead joked on X that it might be terrible news for Nvidia (and Intel). That prediction didn’t exactly hold up: Nvidia is now a company with a market capitalization of around $5 trillion.

If all goes as planned, Google’s growth as an AI cloud provider should bring the chipmaker more business, not less, even as many workloads run on Google’s own chips.

Indeed, Google says it has partnered with Nvidia on networking technology that will let Nvidia-based systems run much more efficiently in its cloud. Specifically, the two tech giants are working to improve Falcon, the software-based networking technology that Google developed and open sourced in 2023 through the Open Compute Project, the granddaddy of open source data center hardware organizations.